Behavioral Health | Compliance

Compliance AI Assistant for Clinical Documentation

Helping behavioral health clinicians reduce audit risk without disrupting how they document care.

Sole Product Designer | 0 → 1 | Healthcare | 6 months

Context & Problem

What Made This Hard

Compliance benefits leadership, but clinicians carry the work.

Real-time pop-ups disrupt writing and reduce adoption.

AI suggestions must be explainable in a high-stakes domain.

The tool had to fit inside an existing documentation workflow.

Constraints

Regulatory risk: errors could expose the organization to audits.

Clinician trust: AI could not feel opaque or authoritative.

Workflow fit: no forced interruptions or new flows.

Technical constraints: had to work within our existing sidebar and documentation system.

Goals

Reduce time spent manually checking notes for compliance.

Increase clinician confidence before submitting documentation.

Lower audit risk without adding cognitive load.

Target: Improve confidence and reduce rework without increasing error risk.

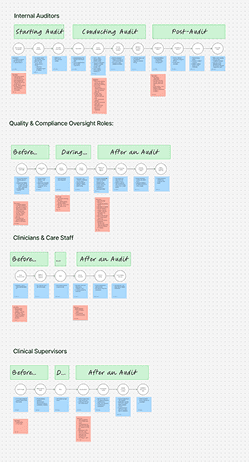

Understanding the Current Workflow

Before designing anything, I needed to understand how documentation and compliance actually worked day to day, across clinicians, compliance teams, and leadership.

I spent time with the people closest to the problem, observing how notes were written, reviewed, and corrected, and where breakdowns most often occurred.

Research activities included:

30+ interviews with clinicians, compliance, quality, and operational leaders

Shadowing internal audits and post-submission review processes

Mapping the end-to-end documentation workflow, from session to claim

Reviewing existing compliance tooling and manual review practices

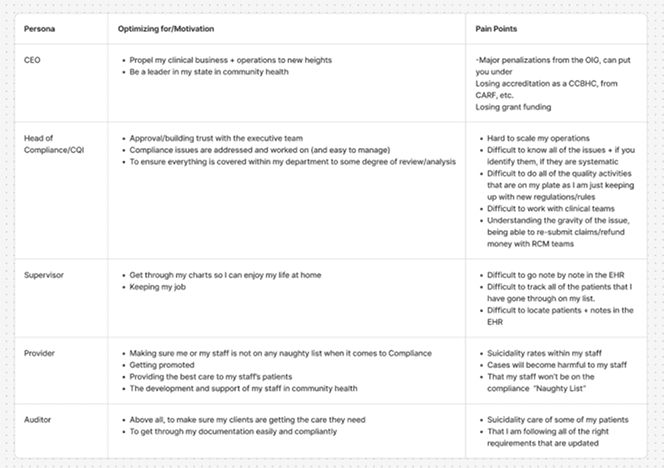

Sample excerpts from the persona needs analysis

Across roles, a consistent pattern emerged: compliance issues were usually discovered too late, after clinicians had already moved on. Fixing them required context switching, guesswork, and back-and-forth between teams.

Clinicians, in particular, were clear about what didn’t work. Interruptions during writing broke their focus and increased frustration. At the same time, simply flagging an issue without explanation created mistrust.

What they wanted instead was straightforward:

Feedback that appeared while the note was being written

Clear explanations of why something was flagged

Guidance on how to fix issues, without taking control away from them

These insights shaped both the timing of feedback and the tone of the experience, and became the foundation for how the AI would support, rather than interrupt, the clinician’s workflow.

Designing the Human-AI Split

From the beginning, this project was less about what the AI could do and more about what it should do.

In a high-stakes clinical setting, full automation would have undermined trust. Clinicians needed to remain responsible for clinical judgment and final documentation, while the AI handled the repetitive, rule-based work that makes compliance stressful and time-consuming.

I designed the system around a clear division of responsibility.

The AI’s role

Continuously review documentation in the background against payer and regulatory criteria

Identify potential compliance issues as the note was being written

Surface issues using plain language, explaining what was missing and why it mattered

Suggest next steps without making changes automatically

The clinician’s role

Write notes in their own voice, without interruption

Review flagged items at their own pace

Decide how to address suggestions or intentionally leave content unchanged

Retain full control over the final submitted note

This separation was intentional. By keeping the AI in a supporting role and making its reasoning visible, the system reduced cognitive load without replacing clinical judgment.

The result was an experience that felt assistive rather than prescriptive: the AI handled complexity in the background, while clinicians stayed in control of their work.
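To make this division of responsibility concrete, here is a minimal Python sketch. It is an illustration only, not the shipped implementation: the names `Flag`, `Rule`, and `review_note` are hypothetical, the keyword check stands in for whatever review logic the AI actually used, and the example rule is a placeholder rather than a real payer or regulatory criterion. The point it shows is structural: every flag carries a plain-language explanation and a suggested step, and the review never edits the note.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Flag:
    rule_id: str
    what_is_missing: str  # plain-language description of the gap
    why_it_matters: str   # reason shown to the clinician
    suggested_step: str   # guidance only; never applied automatically


@dataclass(frozen=True)
class Rule:
    rule_id: str
    is_satisfied: Callable[[str], bool]  # hypothetical stand-in for the AI check
    flag: Flag


def review_note(note_text: str, rules: List[Rule]) -> List[Flag]:
    """Return one flag per unmet rule. The note text is read, never modified."""
    return [rule.flag for rule in rules if not rule.is_satisfied(note_text)]


# Placeholder rule for illustration; not a real payer or regulatory criterion.
EXAMPLE_RULES = [
    Rule(
        rule_id="example-goal-reference",
        is_satisfied=lambda text: "goal" in text.lower(),
        flag=Flag(
            rule_id="example-goal-reference",
            what_is_missing="The note does not mention a treatment goal.",
            why_it_matters="Placeholder explanation shown alongside the flag.",
            suggested_step="Consider adding a sentence that ties the session to a goal.",
        ),
    )
]

draft = "Client discussed sleep difficulties this week."
flags = review_note(draft, EXAMPLE_RULES)
for flag in flags:
    print(f"{flag.what_is_missing} {flag.why_it_matters} {flag.suggested_step}")
```

Because `review_note` only returns flags, the decision to edit the note or leave it unchanged stays with the clinician.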

Key Design Decisions

Throughout the project, I made a series of deliberate tradeoffs to balance compliance rigor with clinician adoption. These decisions prioritized trust, clarity, and workflow fit over strict enforcement.

Avoid forced interruptions

Early research showed that real-time pop-ups and alerts disrupted clinicians’ writing and were quickly dismissed. Instead of interrupting the documentation flow, I integrated the experience into our existing sidebar, which updated continuously in the background and could be reviewed when the clinician was ready. This preserved focus while still making compliance issues visible.

Explain the “why,” not just the issue

Flagging a problem without context created distrust. Each compliance issue was paired with a plain-language explanation describing what was missing and why it mattered. When possible, suggestions included guidance on how to resolve the issue, helping clinicians move forward with confidence.

Resist over-automation

Rather than automatically correcting documentation, the system proposed changes for clinicians to review and edit themselves. This decision preserved clinical voice and judgment, and avoided the feeling that the AI was making assumptions about patient care.

These choices consistently traded speed and enforcement for clarity and confidence. In a domain where errors carry real consequences, this balance proved essential to adoption.

Final Experience

The final solution was designed to fit seamlessly into clinicians’ existing documentation workflow, providing compliance feedback without changing how notes were written.

The experience unfolds in a few simple steps.

1. Write as usual

Clinicians write their progress notes in the primary editor, using their own language and structure. The writing experience remains familiar and distraction-free.

2. Background compliance review

As the note is written, the AI continuously reviews content in the background against payer and regulatory requirements. No alerts or interruptions appear while the clinician is focused on writing.

3. Sidebar feedback appears

A dedicated sidebar surfaces the note’s current compliance status. Issues are grouped by priority, making it easy to distinguish between critical requirements and lower-priority suggestions.

4. Review and resolve issues

Clinicians can review flagged items at their own pace. Each issue includes a short explanation of what’s missing and why it matters, along with guidance on how to address it. Clinicians decide how to respond: they can edit the note or intentionally leave content unchanged.

5. Confident submission

Before submitting, clinicians can quickly confirm that all critical compliance issues have been addressed. This final review step provides reassurance that the note meets requirements, with no manual cross-checking needed.

Together, these steps create a calm, predictable workflow where compliance checks happen continuously in the background, allowing clinicians to focus on care while reducing uncertainty and rework.
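The sidebar grouping and the pre-submission check in the steps above can be sketched in a few lines of Python. This is a hedged illustration under stated assumptions, not the product's actual data model: `Issue`, `Priority`, `Status`, `group_by_priority`, and `open_critical` are hypothetical names, and treating an intentionally unchanged item as "addressed" is an assumption drawn from the clinician's freedom to leave content as is.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Priority(Enum):
    CRITICAL = "critical"
    SUGGESTION = "suggestion"


class Status(Enum):
    OPEN = "open"
    RESOLVED = "resolved"              # clinician edited the note
    LEFT_UNCHANGED = "left_unchanged"  # clinician intentionally kept the content


@dataclass
class Issue:
    title: str
    priority: Priority
    status: Status = Status.OPEN


def group_by_priority(issues: List[Issue]) -> Dict[Priority, List[Issue]]:
    """Group issues for the sidebar, critical items first."""
    grouped: Dict[Priority, List[Issue]] = {p: [] for p in Priority}
    for issue in issues:
        grouped[issue.priority].append(issue)
    return grouped


def open_critical(issues: List[Issue]) -> List[Issue]:
    """Critical issues the clinician has not yet addressed (assumed meaning)."""
    return [
        i for i in issues
        if i.priority is Priority.CRITICAL and i.status is Status.OPEN
    ]


# Hypothetical issue titles for illustration only.
issues = [
    Issue("Example critical requirement", Priority.CRITICAL),
    Issue("Example wording suggestion", Priority.SUGGESTION),
]
sidebar = group_by_priority(issues)
remaining_before = len(open_critical(issues))

# The clinician chooses the response; nothing here changes the note itself.
issues[0].status = Status.LEFT_UNCHANGED
remaining_after = len(open_critical(issues))
```

In this sketch the final check informs rather than blocks: it reports which critical items are still open and leaves the submission decision to the clinician.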

Outcomes

The compliance assistant launched in alpha and was tested with clinicians and operational teams across behavioral health organizations.

While the product was still early, initial signals showed that the approach resonated with both clinicians and compliance stakeholders.

Clinician impact

Clinicians reported feeling more confident submitting notes, knowing compliance issues were being checked continuously

Clinicians second-guessed themselves less and spent less time manually reviewing notes before submission

The sidebar format felt supportive rather than disruptive, improving overall adoption

Operational impact

Compliance and quality teams spent less time flagging issues after submission

Fewer back-and-forth questions between clinicians and reviewers about missing requirements

Improved visibility into common compliance gaps across notes

Product outcome

Successfully shipped an end-to-end compliance workflow from 0 → 1

Validated a human-in-the-loop approach to AI in a high-stakes domain

Established a foundation for expanding rule coverage and deeper analytics in future iterations

Because the tool focused on clarity and trust rather than strict enforcement, adoption signals were strong even at the alpha stage, setting the groundwork for continued iteration and scale.

Reflections

This project reinforced that designing AI for high-stakes workflows is less about intelligence and more about trust.

Explainability builds confidence

In compliance-heavy environments, clinicians cared less about how “smart” the system was and more about whether it could clearly explain its reasoning. Simple language and visible logic did more to build trust than automation alone.

Calm workflows matter more than speed

While faster documentation was a goal, reducing anxiety and uncertainty proved just as important. Giving clinicians space to review feedback on their own terms led to better adoption than forcing immediate action.

Human control is non-negotiable

Keeping clinicians firmly in control of final documentation preserved clinical voice and judgment. Designing the AI as a supportive layer, rather than a decision-maker, made the system feel collaborative instead of prescriptive.

Constraints sharpen design decisions

Regulatory risk, workflow limitations, and technical constraints forced clearer prioritization. Rather than being limiting, these constraints helped define what the product needed to be and what it intentionally should not do.

Overall, this work shaped how I think about thoughtful AI: systems that handle complexity quietly in the background, respect people’s time, and help them finish their work with confidence so they can move on with their day.